Introducing the Intuiface Family

Intuiface Composer: The authoring tool

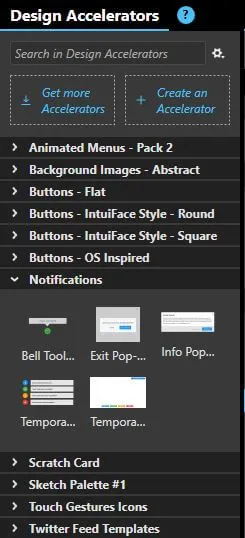

Composer is where you create your interactive experiences using a mouse and keyboard. An embedded version of Player (see next section) ships with Composer so you can test your work. No touch screen is required; think of your mouse as a single finger. Detailed technical specifications are published separately. To see what an Intuiface project looks like under the covers, have a look at our online documentation.


- No-code paradigm: Never write a line of code. Every capability is designed for use by even the most non-technical of users. Unleashes creativity by removing technical barriers.

- Uses existing content (images, videos, documents, 3D models, audio, etc.): No proprietary formats, no reinvention/recreation of existing design work.

- Includes dynamic components such as a web browser and maps: No “sandbox” limitations. Freedom to access and display external design work and information.

- Access to external data sources and business components: Fast “development” of interactive interfaces to existing business components. Includes API Explorer, enabling the no-code creation of dynamic integrations with any Web API.

- Fine control of appearance and geometry: Full creative freedom. No template constraints or design restrictions.

- Triggers and actions: Extensive library with hundreds of events to choose from, and hundreds of actions to run in response to those events. Again, and we’ll keep saying it, without programming.

- Introduces "value binding": Mirror property values across local and network-hosted elements to create responsive designs and always up-to-date content.

Intuiface Player: The runtime

Player is the bit of software necessary to run all of the interactive experiences you create in Composer. Well, it's a little more than a "bit of software" since it's actually a virtual machine that interprets Composer's output and makes the whole execution feel like just another app. By running Player in-venue on Windows, iOS (iPads only), Android, BrightSign, Chrome OS, Samsung Tizen, and Raspberry Pi, you can take advantage of remote deployment and device management. Alternatively, when deploying experiences to the web or as an app on personal mobile devices, you never even see Player. Your experiences look and act like native applications.


- Touch and beyond: Support for touch, gesture, sensor, voice, computer vision, and more. Availability varies by platform and deployment option, with Windows-based devices supporting the full set of options.

- Multi-OS support: In-venue support includes Windows, iOS (on iPads), Android, BrightSign, Samsung Tizen, LG webOS, and Chrome OS. Deployment to the web and as an app on personal mobile devices supports phones/tablets/PCs running Windows, iOS, Android, macOS, and Linux.

- Peripheral integrations: Can work with printers, barcode scanners, payment terminals, and more - all of the devices one might find connected to a kiosk.

- Inter-Player communication: Creation of multi-screen collaborative experiences. Without programming!

- Agnostic to display make/model: No display vendor lock-in. Freedom to change make/model without having to change the experience.

- White label option: Take ownership of the splash screen when an experience is automatically launched.

Headless CMS: The content manager

Our Headless CMS is a cloud-hosted repository enabling content managers to define, store, and manage the media and information consumed by Intuiface deployments anywhere in the world. By "headless" we mean the data structure is independent of any particular user interface. In fact, the same content "base" can be applied to multiple, visually distinct deployments, enabling content managers to focus on just their data.


- Create well-structured content for a variety of media formats. Includes support for file types like images, videos, and documents, plus concepts like colors and date/time.

- Use Variants to identify fields that vary by context. For example, if your experience needs to support multiple languages, don't create two separate lists; create one list and apply variants to its Text fields.

- Manage users and workflows to control the editing and publishing of data. Each base can have multiple users, but you can control what they have permission to edit. And a publishing workflow ensures data only reaches the field when ready.

- Depend on a locally cached, synchronized copy of each base. With this local copy on every device in the field, you get lightning-fast data transfer and great resiliency in the face of unreliable network connections.

- Coming Soon: Automate import from third-party enterprise data management solutions like CRMs, DAMs, DXPs, and CMSs.

- Coming Later: Complete API for reading from and writing to a Headless CMS base, which even enables the use of Headless CMS with non-Intuiface-based user interfaces.
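
To make the Variants idea concrete, here is a minimal sketch of a single list whose text fields carry per-context values. It is only an illustration of the concept: the `TextField` class, the `resolve` method, and the sample products are hypothetical and do not reflect how Headless CMS actually stores or exposes data.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class TextField:
    """Hypothetical text field: one default value plus optional variants."""
    default: str
    variants: Dict[str, str] = field(default_factory=dict)

    def resolve(self, context: Optional[str] = None) -> str:
        # Use the variant for this context if one exists, else the default.
        if context is None:
            return self.default
        return self.variants.get(context, self.default)


# One list serves every language; only the text fields vary.
products = [
    {"name": TextField("Red chair", {"fr": "Chaise rouge"}), "price": 49},
    {"name": TextField("Oak table"), "price": 199},
]


def render_names(items: List[dict], context: Optional[str] = None) -> List[str]:
    """Return the product names as they would appear in a given context."""
    return [item["name"].resolve(context) for item in items]


if __name__ == "__main__":
    print(render_names(products))        # default language
    print(render_names(products, "fr"))  # French where a variant exists
```

Items without a variant for the requested context fall back to the default value in this sketch, so a single list never needs to be duplicated per language.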

Intuiface API Explorer: The cloud connector

API Explorer enables the no-code creation of support for any REST-based Web Services query, opening the door to thousands of public and private APIs accessible via Web Services. That includes everything from movie listings and weather forecasts to currency conversion, the latest photos from NASA, and all those connected objects in the Internet of Things. This thing is so powerful and unique that we're patenting it!


- Supports entry of any request URL or curl statement; displays the contents of any XML or JSON-formatted response.

- Uses a machine learning engine to accurately type each property (is it text? currency? webpage?) and to identify the values thought most likely to be important for display in your experience.

- Automatically generates a dynamic connector (aka an "interface asset") for the specified query and creates a visualization, within your experience, of the properties you specified.

- Enables the automatic display of multi-page results in a single view, avoiding the need to manually sift through each page one at a time.

- Permits on-the-fly modification of host, endpoint, and query parameters to tailor your Web request to your specific needs.
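
For readers curious about what API Explorer spares you from writing, the sketch below shows the two ideas from the list above in plain Python: composing a request from a host, an endpoint, and query parameters, and gathering multi-page results into a single list. Everything here is an assumption made for illustration, including the `api.example.com` host, the `page` parameter, and the `items`/`next_page` response shape; real APIs paginate in many different ways, and this is not how API Explorer is implemented.

```python
from typing import Callable, Dict, List
from urllib.parse import urlencode, urlunsplit


def build_url(host: str, endpoint: str, params: Dict[str, object]) -> str:
    """Assemble an HTTPS request URL from its three adjustable parts."""
    return urlunsplit(("https", host, endpoint, urlencode(params), ""))


def fetch_all_pages(
    fetch_page: Callable[[str], dict],
    host: str,
    endpoint: str,
    params: Dict[str, object],
) -> List[object]:
    """Merge every page of results into one list.

    `fetch_page` is supplied by the caller and returns a parsed response
    with an `items` list and a `next_page` number (or None); that response
    shape is hypothetical.
    """
    results: List[object] = []
    page = 1
    while page is not None:
        url = build_url(host, endpoint, {**params, "page": page})
        body = fetch_page(url)
        results.extend(body["items"])
        page = body.get("next_page")
    return results


def fake_fetch(url: str) -> dict:
    """Stand-in for a real HTTP call so the sketch runs offline."""
    if url.endswith("page=1"):
        return {"items": ["a", "b"], "next_page": 2}
    return {"items": ["c"], "next_page": None}


if __name__ == "__main__":
    print(build_url("api.example.com", "/v1/forecast", {"city": "Paris"}))
    print(fetch_all_pages(fake_fetch, "api.example.com", "/v1/items", {"q": "x"}))
```

Passing the fetch function in as an argument keeps the sketch independent of any particular HTTP library; in practice it could wrap whichever client you prefer.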

Intuiface Share and Deploy: The link to the outside

The Share and Deploy console is accessible via our web-based Management Console, which is home to a variety of functions including license and purchase management. It lets you manage experiences: publish them to the cloud, share them with others, and deploy them on a schedule to geographically distributed devices, all without leaving your desk. Intuiface hosts the service, so there is nothing for you to install and no advanced degree required. For details, check out our Help Center article.


- Publish experiences to the cloud: One-click upload of your projects to a free Intuiface Cloud Storage account, hosted on Amazon Web Services (AWS) infrastructure.

- Share experiences with colleagues and clients for editing: Just enter an email address. A sharing notification email is sent and Composer flags the newly shared experience as available for download.

- Share experiences with clients for review: Distribute a unique URL. Entering it in a browser on Windows, iPad, Android and Chrome will automate installation of a self-running version of your experience.

- Deploy experiences and manage Players on a schedule: Specify the time, date, and frequency of experience deployment, Player version upgrade, and more for devices located anywhere in the world. Never again walk the halls or hit the road with USB stick in hand.

- Manage your network: A real-time inventory of any Internet-accessible instance of Intuiface Player - regardless of geography - for device-level deployment of experiences.

- Access through web-based control panel: Accessible through any web browser running on any operating system. You don't even need Intuiface Composer or Player running on the same machine to use it.

- Monitor with crash recovery: For Windows PCs, restart experiences and Player itself if the remote device is rebooted. Across all supported operating systems, you can even see the active scene in the running experience on each device.

Intuiface Analytics: The measuring stick

Turn your Intuiface experience into an essential KPI resource by defining, collecting, visualizing, and sharing the data that drive design, operational, and business insights. Capture data identifying user preferences and their context (gender, weather, location, etc.). Then build charts and dashboards to reach actionable user insights.


- Log virtually any event (e.g., scene dwell time, user action, data input, environmental info).

- Use unlimited parameters for each logged event, maximizing the richness of reported information.

- Identify sessions (coupled with improved RFID/NFC tag reader support) so you can differentiate users.

- Upload event information (aka data points) in real time to a centralized, cloud-based Hub, permitting almost immediate access to data across even the largest deployment.

- Visualize data using 30+ chart types and a powerful yet simple drag-and-drop chart editor.

- Optionally forward data to third-party analytics, marketing automation, and data warehousing platforms via built-in integrations for Excel, Google Analytics, Mixpanel, and Segment, as well as via REST Web Service data pulls.

- Collect and share custom-built charts using our dashboard feature, giving colleagues and clients interactive visualizations with a real-time connection to the Data Hub so information is always up to date.
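
As a rough picture of what a logged data point might carry, the sketch below models an event with an arbitrary number of parameters and a session identifier, then counts events per session. The field names (`event`, `session`, `timestamp`, `parameters`) and the sample events are assumptions chosen for illustration; they are not the schema Intuiface Analytics uses.

```python
import time
from collections import Counter
from typing import Dict, List


def make_data_point(event: str, session_id: str, **parameters: object) -> Dict[str, object]:
    """Build one data point; any number of key/value parameters is allowed."""
    return {
        "event": event,
        "session": session_id,
        "timestamp": time.time(),
        "parameters": parameters,
    }


def events_per_session(data_points: List[Dict[str, object]]) -> Counter:
    """Count logged events per session so users can be told apart."""
    return Counter(point["session"] for point in data_points)


# Hypothetical events from two different visitors.
points = [
    make_data_point("scene_dwell", "session-1", scene="Home", seconds=12.5),
    make_data_point("button_tap", "session-1", button="Buy", weather="rain"),
    make_data_point("scene_dwell", "session-2", scene="Catalog", seconds=4.0),
]

if __name__ == "__main__":
    for session, count in events_per_session(points).items():
        print(session, count)
```

Keeping parameters as free-form key/value pairs is what lets each event record as much context as the situation calls for.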
